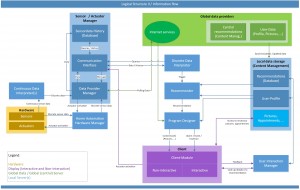

In close collaboration, the University of Augsburg and the University of Applied Sciences Augsburg have developed an architecture for the CARE system (see picture), which is explained below:

First, sensor data (about the environment and the user) is recorded and processed into usable data. This data is stored and, from then on, accessible through the “Sensor / Actuator Manager”. The “Discrete Data Interpreter” interprets the data and hands it to the “Recommender” in the form of triggers (e.g. a person has sat for one hour without moving). Using the trigger together with a user-specific profile (“User-Profile”), a “Recommendations-Database” and internet services, the Recommender generates person- and action-specific recommendations and transfers them to the “Program-Designer”. That module builds a program from the given recommendations and personal data (“User-Data”), which is then shown on the clients (e.g. digital picture frames, tablets, TVs). Optionally, different actuators (such as sound or light) can be activated to improve the presentation of a recommendation, so that the user notices it more readily. If the user wants to give feedback or look up details about a recommendation, they can do so with an interactive device (such as a tablet or laptop). The “User Interaction Manager” then analyses this data and saves it for future recommendations.
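The data flow described above can be illustrated with a minimal Python sketch. It is not the CARE implementation: the class and function names (`SensorReading`, `interpret`, `recommend`, `build_program`), the one-hour inactivity rule as the only trigger, and the tag-based matching against the user profile are all assumptions made for illustration.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class SensorReading:
    sensor: str        # hypothetical sensor name, e.g. "motion"
    timestamp: float   # seconds since an arbitrary reference point
    value: float       # > 0 means movement was detected


@dataclass
class Trigger:
    name: str
    timestamp: float


@dataclass
class UserProfile:
    name: str
    interests: list[str] = field(default_factory=list)


def interpret(readings: list[SensorReading],
              idle_limit: float = 3600.0) -> Optional[Trigger]:
    """Stand-in for the Discrete Data Interpreter: emit an 'inactive'
    trigger if no movement was seen for at least idle_limit seconds."""
    if not readings:
        return None
    # Start counting from the first reading, then move the reference
    # forward every time movement is detected.
    last_move = readings[0].timestamp
    for r in readings:
        if r.sensor == "motion" and r.value > 0:
            last_move = r.timestamp
    latest = readings[-1].timestamp
    if latest - last_move >= idle_limit:
        return Trigger("inactive", latest)
    return None


def recommend(trigger: Trigger, profile: UserProfile,
              database: dict[str, list[tuple[str, str]]]) -> Optional[str]:
    """Stand-in for the Recommender: pick the first database entry whose
    tag matches a user interest, falling back to the first entry."""
    candidates = database.get(trigger.name, [])
    for tag, text in candidates:
        if tag in profile.interests:
            return text
    return candidates[0][1] if candidates else None


def build_program(recommendations: list[str], profile: UserProfile) -> list[str]:
    """Stand-in for the Program-Designer: combine personal data and
    recommendations into a list of slides for the clients."""
    return [f"Hello {profile.name}"] + recommendations


# Example run with made-up data.
readings = [
    SensorReading("motion", 0.0, 1.0),
    SensorReading("motion", 1800.0, 0.0),
    SensorReading("motion", 3700.0, 0.0),
]
database = {
    "inactive": [
        ("walking", "Take a short walk."),
        ("music", "Put on a song and stretch."),
    ]
}
profile = UserProfile("Anna", ["music"])

trigger = interpret(readings)
program = []
if trigger is not None:
    text = recommend(trigger, profile, database)
    if text is not None:
        program = build_program([text], profile)
```

In this sketch each module only consumes the output of the previous one, which mirrors the chain from sensor data to trigger to recommendation to program. Actuators and the feedback path through the “User Interaction Manager” are left out for brevity.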
